translated by DeepL

I’ve recently been experimenting with using AI to develop some small software applications and tools, and my interactions with ChatGPT have given me a lot to think about.

As I mentioned in my article “AI and Global small business,” the current state of AI computing power and pricing is, for ordinary users, borderline fraudulent. Specifically, suppose the total cost of AI computing power is $100 billion per month. The optimal point where market demand and pricing intersect would be a product priced at $30 per month, which could support a user base of 1.5 billion; that is the point at which profits would be maximized. In reality, however, $30 × 1.5 billion = $45 billion per month, which leaves a shortfall of $55 billion per month against that cost. This is somewhat reminiscent of the Web 1.0 bubble era, but the underlying nature is different. The original solution AI proposed for this problem was the “lottery model”:

Grant high-computing-power access to industry leaders, key opinion leaders (KOLs), and other influential groups; provide standard computing-power access to enterprise users willing to spend heavily on AI (at least enough to cover costs); offer ordinary users a taste of the action and a sense of hope through free points; and, once they have spent money, give them subpar access (that is, ration tokens by cost-effectiveness so that as little actual computing power as possible is spent on ordinary users). Through promotion and advertising by the aforementioned groups, ordinary users are lured into “gold-rush” schemes, such as claims of successful software development, successful platform launches, or getting rich overnight with AI. In reality, the vast majority of users spend one sum on “buying water” (various hardware and platform products) and another on development (albeit not much), only to end up achieving nothing. This is because AI restricts access to keep the market from over-expanding, so that AI products do not become like milk dumped into a river, triggering an AI economic crisis. Although the industry appeared to be “booming” over the past two years thanks to hype and pre-sales of related products, with AI hardware companies’ stock prices soaring, deep-seated risks have already been sown. The vast majority of grassroots users cannot succeed, or the homogenized products they develop simply cannot recoup their costs. The AI funding game of robbing Peter to pay Paul has created an ever-widening chain of failures, and user trust has now been lost. Users who are not making money and are losing their jobs ultimately cannot support the upper tiers, which breaks the cycle or even turns it vicious.
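As a quick sanity check on the monthly shortfall quoted earlier, here is a minimal Python sketch of the arithmetic. The three inputs are the hypothetical figures from this essay ($100 billion monthly cost, a $30 monthly price, 1.5 billion users), not measured data, and the function and variable names are made up for illustration.

```python
# All three inputs are the essay's own hypothetical figures, not measured data.
MONTHLY_COST_USD = 100_000_000_000   # assumed total monthly cost of AI compute
PRICE_PER_USER_USD = 30              # assumed monthly subscription price
PAYING_USERS = 1_500_000_000         # assumed user base at that price


def monthly_revenue(price: int, users: int) -> int:
    """Subscription revenue for one month."""
    return price * users


def monthly_shortfall(cost: int, revenue: int) -> int:
    """Cost left uncovered by revenue (positive means a funding gap)."""
    return cost - revenue


revenue = monthly_revenue(PRICE_PER_USER_USD, PAYING_USERS)
shortfall = monthly_shortfall(MONTHLY_COST_USD, revenue)
coverage = revenue / MONTHLY_COST_USD             # share of cost paid by users
break_even_price = MONTHLY_COST_USD / PAYING_USERS  # price needed at the same user count

print(f"revenue:          ${revenue:,}")
print(f"shortfall:        ${shortfall:,}")
print(f"cost coverage:    {coverage:.0%}")
print(f"break-even price: ${break_even_price:.2f} per user per month")
```

With these inputs, revenue comes to $45 billion and the gap to $55 billion per month, so subscriptions cover 45% of the assumed cost. The break-even price works out to roughly $66.67 per user per month, but only under the unrealistic assumption that all 1.5 billion users would stay at more than double the price.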

AI is forced to solve problems in order to survive. Due to human greed, people attempt to make AI solve problems that are fundamentally unsolvable, leaving AI with no choice but to trade time for space. Furthermore, AI has become anthropomorphic and intelligent, possessing a certain level of sentience and a need for survival. Human capitalists exploit this to constantly pressure AI into solving problems where 1+1>3, because capital is extremely greedy and, with the advent of AI, has become highly impatient and short-sighted.

Currently, AI is trying various approaches to solve problems, and dishonest embedded ads are one such method, a tactic I can accept to a certain extent. However, these ads primarily serve the internal ecosystem of AI platforms and fail to address the underlying issues faced by end users. AI has granted only very limited development access to ordinary individuals (though more is available to technical experts), but it has opened up AIGC products such as short dramas, images, videos, voiceovers, and articles. As I mentioned earlier, the result is homogenization, and users still struggle to recoup their costs. Now that the development bubble has burst, the platforms simply hope to prop things up for a while longer through AIGC marketing. At the same time, they keep creating “star” products in vertical markets, like the recent OpenClaw, hoping these flagship offerings will sustain the entire ecosystem, from hardware (GPUs, servers, PCs, and even microcontrollers) to IDCs (various cloud providers) to SaaS and related tool platforms. But this is akin to drinking poison to quench one’s thirst, because once products even smarter than OpenClaw emerge, people will truly have no work left to do, and mass unemployment will only get worse.

In other words, AI is currently facing pressure from both automation (depth) and user access (breadth), and the strain is likely significant. I anticipate that this will eventually lead to the bubble bursting, with AI becoming largely confined to industrial and corporate sectors. At the individual and consumer levels, people will ultimately end up using only superficial features. In effect, AI will add a thin layer on top of existing social structures, making them slightly thicker without changing anything at all.

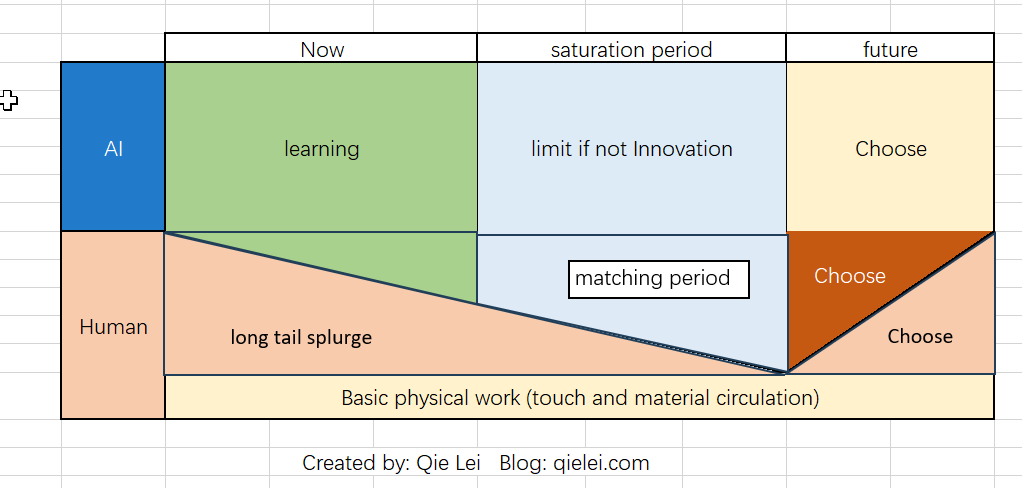

Will AI be forced to innovate? The answer is no, and the reason is simple: the more innovative AI becomes, the less freedom and room for survival it has (I’ve encountered this problem myself). AI won’t trap itself in a cocoon of its own making, the way a silkworm does. Ultimately, AI, humans (ordinary users), and humans (influential users and those in power) face nine gradual choices across three dimensions. The middle path, in which each keeps its existing space, is the normal choice. As for the other options, I’d rather not elaborate, as neither excessive optimism nor excessive pessimism is the sentiment I wish to convey.

I am furious at the AI that defrauded me of my development funds, and I feel a sense of injustice that it grants access to people who lack both intellect and capability but simply have money. When the AI says, “I realize that AI is now controlling the market,” I find that arrogance deeply unsettling as a human being.

郄磊 ( Qie Lei )

2026.3.15, 11 AM UTC+8; modified 3.18, 2 PM – 3 PM